UX & UI: Embedded User Interface

Ergotron is developing a new medical device with an embedded system that is part of the IoT. Nurses will use this device during a shift to help them care for patients in hospitals and nursing homes. The device has a 4.3-inch color LCD touchscreen that operates certain device features related to nursing tasks. The interface removes battery anxiety and reduces cognitive load with an innovative, seamless design.

My role was to research and design the user experience and to test all interfaces for this embedded UI. I also created all visual design stages, from concept to final handoff to engineering. I collaborated with product management and engineering to help launch this product.

Services

Discover

Research

Gaining an understanding of the nurse in their environment was the first step. I reviewed research findings the team had gathered through visits to hospitals in the U.S. and Europe. I watched YouTube videos on how nurses work during a shift and on medical administration. I also spoke to nurses in my personal network.

This preliminary step provided valuable insights from which to start sketching ideas.

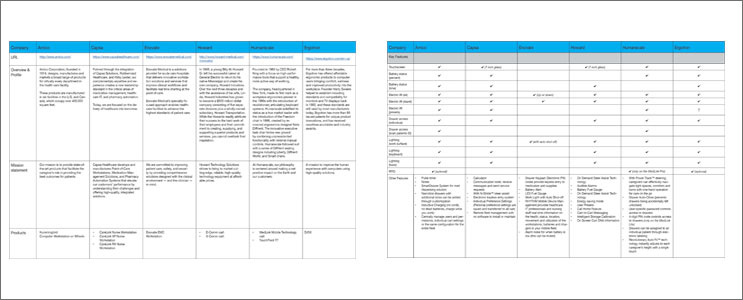

Competitive Analysis

I conducted a competitive analysis to find out where the product currently fit in the market.

In the analysis, I included a detailed list comparing the features of the top competitors. This provided insight into product enhancements that could be added now or in future releases.

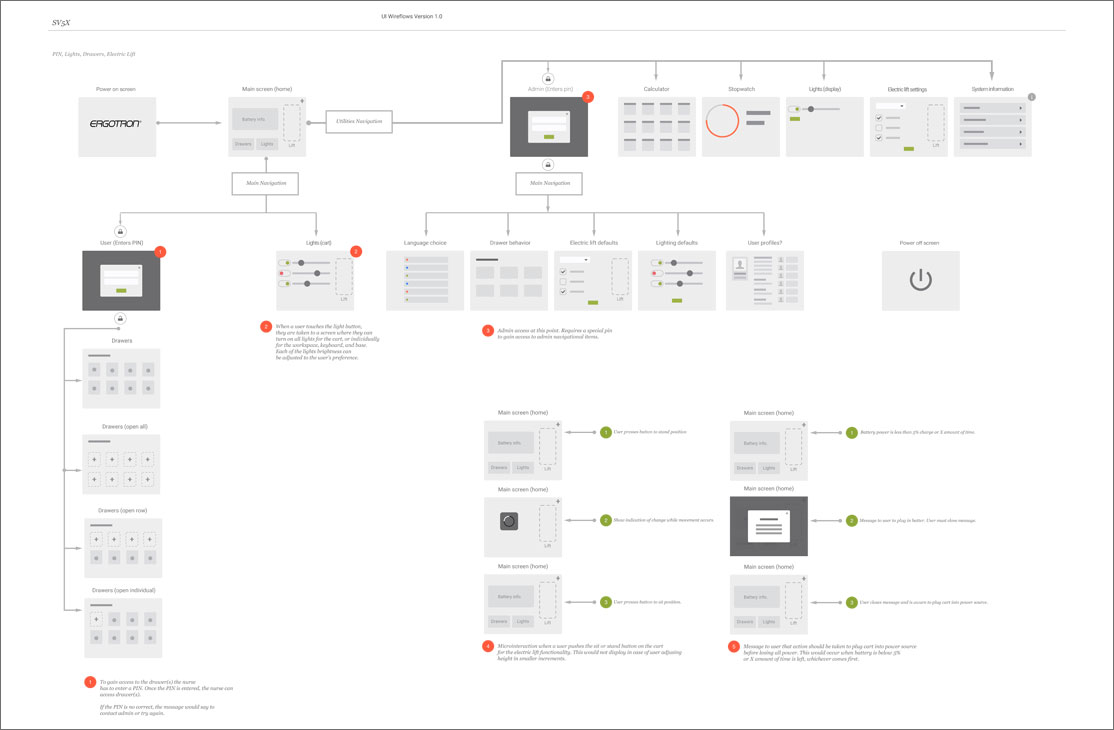

Sitemaps

Before sketching ideas for the interface, I created sitemaps to define the user flow based on:

- Tasks from the perspective of the nurse versus administrator

- Key information from the research findings

- Various cart configurations available to buy

Design

Wireframes

I prefer starting out with sketches to think through the structure of an interface. I used Adobe XD to create wireframes and iterate through the design process.

Prototype & testing

Since the user interface controls a physical device, I thought outside the box to complete user testing. I used a variety of tactics, taking these key issues into consideration:

- Size of the actual touchscreen related to various finger sizes and responsiveness

- Actual data/information on the cart changing on a per-minute basis

- Color blindness and legibility of color choices on an embedded UI

- Selections on the touchscreen controlling the physical device

To test the interface with the data/information changes, I showed users an animated GIF. This provided valuable feedback and insight into the design. I was able to iterate on the design, retest it, and then refine it.

It was important to test the touchscreen interface at the actual screen size and in relation to the physical device. I deployed clickable wireframes to my iPhone, which mimicked the size of the UI, and placed the phone on a prototype of the device. I then asked 8 users with various finger sizes to work through scenarios and complete a set of tasks using this clickable prototype. Testing identified problem areas to iterate on, retest, and then refine.